📖 Project Introduction

InnoSpark is an advanced educational large language model developed independently by the School of Intelligence Education at East China Normal University and Shanghai Innovation Institute. It explores the deep application of artificial intelligence in education. InnoSpark-1.0 is built on the domestically developed Qwen large language model through secondary pre-training, followed by subdomain fine-tuning and reinforcement learning for educational scenarios.

🔗 Related Resources

📱 Main Products

- Homepage: InnoSpark Official

- RM Model: InnoSpark-HPC-RM-32B

- Educational Evaluation System: ELMES

🤖 Model Series

| Model Version | Parameters | Link |
|---|---|---|
| InnoSpark-min | 0.5B | 🔗 Download |
| InnoSpark-turbo | 7B | 🔗 Download |
| InnoSpark-plus | 72B | 🔗 Standard / 🔗 Inference |

📊 Datasets

- Model Scoring Dataset: HPC-LLM-8k

- Human Scoring Dataset: HPC-Human-8k

🚀 Quickstart

The following code snippet uses `apply_chat_template` to show how to load the tokenizer and model and how to generate content.

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "sii-research/InnoSpark-72B-0710"

# Load the model; device_map="auto" places the weights on the available devices.
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)
tokenizer = AutoTokenizer.from_pretrained(model_name)

prompt = "Introduce yourself in detail."
messages = [
    {"role": "system", "content": "You are InnoSpark(启创), created by Shanghai Innovation Institute (上海创智学院) and East China Normal University(华东师范大学). You are a helpful assistant."},
    {"role": "user", "content": prompt}
]

# Render the conversation into the model's chat format as plain text.
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False,
    add_generation_prompt=True
)
# Move the inputs to the device that holds the model's first layers.
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# Passing **model_inputs forwards the attention mask along with the input IDs.
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=512
)
# Drop the prompt tokens so that only the newly generated tokens remain.
generated_ids = [
    output_ids[len(input_ids):] for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)
]

response = tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]
print(response)
```
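To make the role of `apply_chat_template` more concrete, the sketch below renders a message list by hand. It assumes the ChatML layout used by Qwen-family templates (`<|im_start|>` and `<|im_end|>` markers around each turn); the authoritative template is whatever ships with the InnoSpark tokenizer, so `render_chatml` is a hypothetical illustration, not a replacement for the tokenizer call.

```python
def render_chatml(messages, add_generation_prompt=True):
    """Hypothetical stand-in for tokenizer.apply_chat_template(..., tokenize=False).

    Assumes a ChatML-style layout; the real template is defined by the tokenizer.
    """
    parts = []
    for message in messages:
        parts.append(f"<|im_start|>{message['role']}\n{message['content']}<|im_end|>\n")
    if add_generation_prompt:
        # Open an assistant turn so the model continues with its reply.
        parts.append("<|im_start|>assistant\n")
    return "".join(parts)


messages = [
    {"role": "system", "content": "You are a helpful assistant."},
    {"role": "user", "content": "Introduce yourself in detail."},
]
text = render_chatml(messages)
print(text)
```

With `add_generation_prompt=True` the rendered text ends with an open assistant turn, which is why the generated tokens begin directly with the reply.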

vLLM

We recommend deploying the model on 4 A100 GPUs. Start the vLLM server from a terminal with the following command:

```bash
python -m vllm.entrypoints.openai.api_server \
    --served-model-name InnoSpark \
    --model path/to/InnoSpark \
    --gpu-memory-utilization 0.98 \
    --tensor-parallel-size 4 \
    --port 6000
```
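As a rough sanity check on the 4-GPU recommendation, the arithmetic below estimates the weight footprint of the 72B model. It assumes 16-bit weights and 80 GB A100 cards, neither of which is stated here, and it ignores activations and framework overhead, so the result is a back-of-the-envelope figure only.

```python
# Assumptions (not stated in this README): bf16/fp16 weights, 80 GB A100 cards.
PARAMS = 72e9          # parameter count of InnoSpark-plus
BYTES_PER_PARAM = 2    # 16-bit weights
GPU_MEMORY_GB = 80     # assumed A100 variant
NUM_GPUS = 4           # matches --tensor-parallel-size 4
UTILIZATION = 0.98     # matches --gpu-memory-utilization 0.98

weights_gb = PARAMS * BYTES_PER_PARAM / 1e9
budget_gb = GPU_MEMORY_GB * NUM_GPUS * UTILIZATION
# Upper bound on what remains for the KV cache; real headroom is smaller.
kv_cache_gb = budget_gb - weights_gb

print(f"weights: {weights_gb:.0f} GB")
print(f"memory budget: {budget_gb:.1f} GB")
print(f"left for KV cache (at most): {kv_cache_gb:.1f} GB")
```

Under these assumptions the weights occupy about 144 GB, and the rest of the budget is what vLLM can draw on for the KV cache.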

Once the server is running, you can query it from a client with the following code:

```python
import json

import requests


def Innospark_stream(inputs, history):
    """Stream a chat completion from the vLLM server, yielding text deltas."""
    url = "http://localhost:6000/v1/chat/completions"
    history += [{"role": "user", "content": inputs}]
    headers = {"User-Agent": "vLLM Client"}
    pload = {
        "model": "InnoSpark",
        "stream": True,
        "messages": history,
    }
    response = requests.post(url, headers=headers, json=pload, stream=True)

    assistant_reply = ""
    for chunk in response.iter_lines(chunk_size=1,
                                     decode_unicode=False,
                                     delimiter=b"\n"):
        if not chunk:
            continue
        # Each streamed line has the form "data: <payload>"; drop the prefix.
        payload = chunk.decode("utf-8")[6:]
        if payload == "[DONE]":
            # End of stream: record the full reply in the conversation history.
            history += [{
                "role": "assistant",
                "content": assistant_reply,
                "tool_calls": [],
            }]
            break
        delta = json.loads(payload)["choices"][0]["delta"]
        # The first chunk carries only the role; later chunks carry content.
        delta_content = delta.get("content")
        if delta_content:
            assistant_reply += delta_content
            yield delta_content


inputs = "hi"
history = []
for response_text in Innospark_stream(inputs, history):
    print(response_text, end="")
```
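The `[6:]` slice in the client strips the `data: ` prefix that precedes each streamed event. The helper below isolates that parsing step so it can be checked without a running server. `parse_stream_line` is a hypothetical name, and the sample lines are hand-written to mirror the OpenAI-style chunk layout that the client above expects.

```python
import json

DATA_PREFIX = "data: "


def parse_stream_line(line: bytes):
    """Return the text delta in one streamed line, or None if it carries none.

    Hypothetical helper; assumes OpenAI-style chat completion chunks.
    """
    text = line.decode("utf-8")
    if not text.startswith(DATA_PREFIX):
        return None
    payload = text[len(DATA_PREFIX):]
    if payload == "[DONE]":
        # Sentinel marking the end of the stream.
        return None
    delta = json.loads(payload)["choices"][0]["delta"]
    # A role-only chunk has no "content" key, so this yields None for it.
    return delta.get("content")


# Hand-written sample lines, not captured server output.
sample_lines = [
    b'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    b'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    b'data: {"choices": [{"delta": {"content": "lo"}}]}',
    b"data: [DONE]",
]
reply = "".join(piece for piece in map(parse_stream_line, sample_lines) if piece)
print(reply)
```

Checking the prefix explicitly, rather than slicing a fixed number of characters, keeps blank or unexpected lines from being passed to `json.loads`.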

🌟 Core Features

🎯 Open Source Product Matrix

1. 📚 InnoSpark Model Series

- Six models across three parameter scales: min (0.5B), turbo (7B), and plus (72B), each with a corresponding inference (R) version

2. 🔍 ELMES Evaluation System

- Education Language Model Evaluation System

- Automated evaluation system for educational tasks

- Helps continuously optimize large model capabilities in teaching scenarios

3. 🛠️ COCLP Data Cleaning Pipeline

- Corpus Cleansing Pipeline

- Visual, node-based framework built on ComfyUI

- Supports OCR, audio/video transcription, format conversion, PII removal, text filtering, and other functions

4. ⭐ HPC-RM Reward Model

- Helpful, Personalization, and Creativity Reward Model

- Scores responses along three educational dimensions: helpfulness, personalization, and creativity

- Includes corresponding model scoring and human scoring datasets

📈 Performance Results

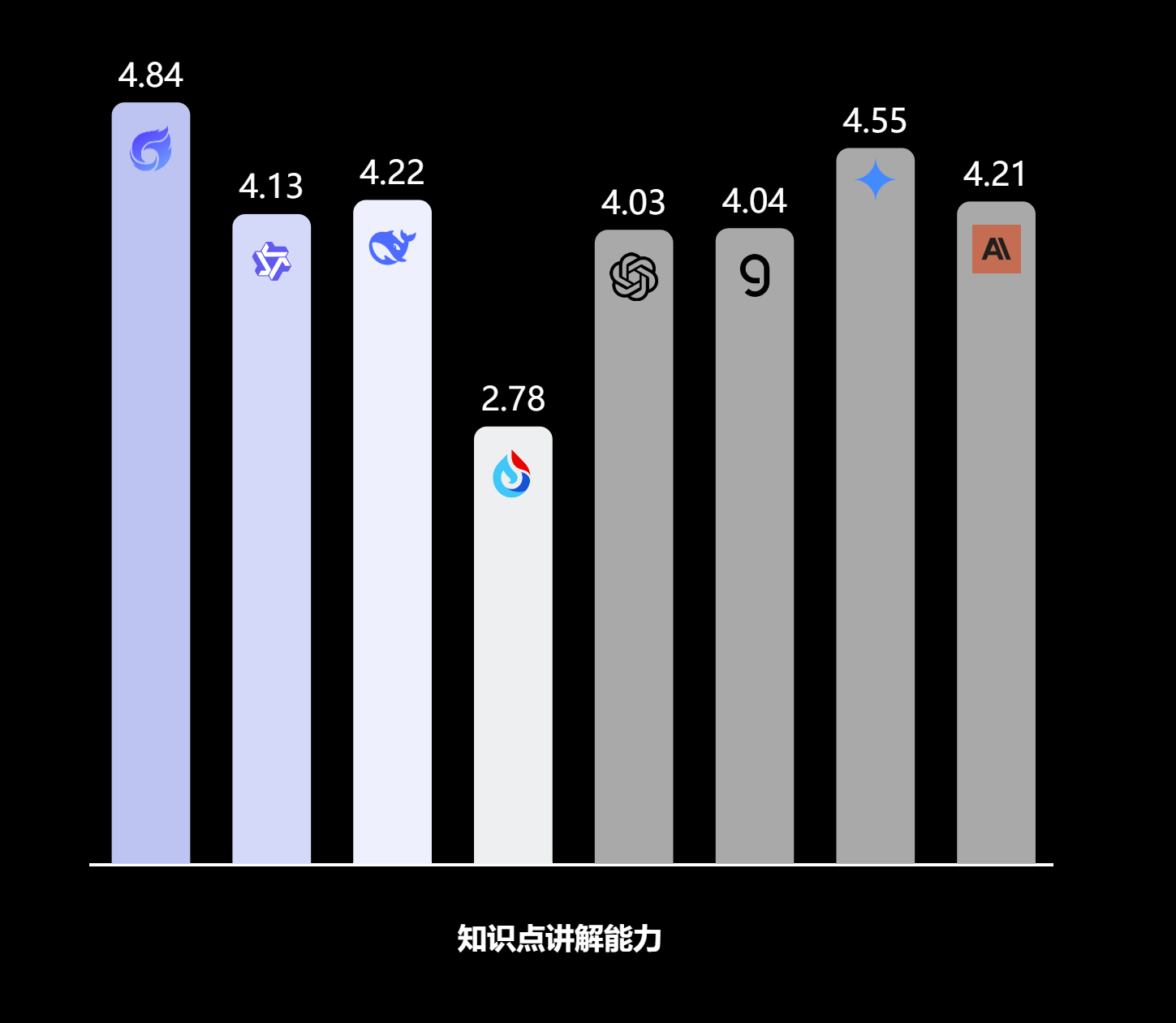

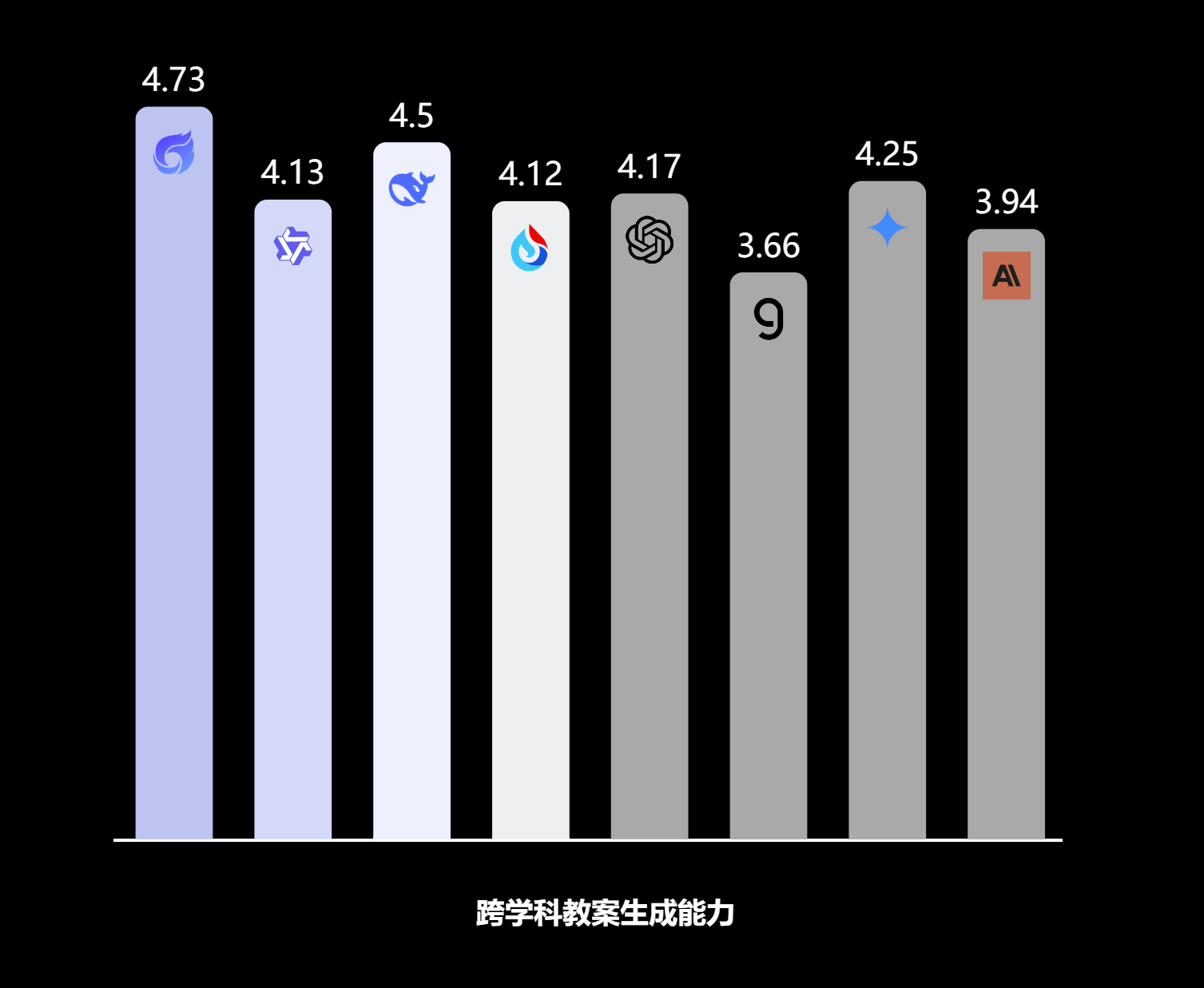

InnoSpark achieved the best performance in four key educational scenarios:

🏆 Evaluation Results

| Scenario | Performance |
|---|---|
| 📝 Knowledge Explanation |  |
| 🧭 Guided Problem Solving |  |
| 📚 Interdisciplinary Lesson Plans |  |
| 🎭 Contextual Question Generation |  |

🎨 Application Examples

| Scenario | Demo |
|---|---|
| 📖 Knowledge Explanation |  |
| 🎯 Guided Problem Solving |  |
| 🌟 Interdisciplinary Lesson Plans |  |
| 🎪 Contextual Question Generation |  |

🏛️ Technical Support

This project is jointly developed by the School of Intelligence Education at East China Normal University and Shanghai Innovation Institute. The reward model was trained using the Verl-Sii training framework provided by Shanghai Innovation Institute.

📄 License

Please refer to the relevant model pages for specific license information.