End of training

- README.md +2 -1
- all_results.json +16 -0
- train_results.json +16 -0
- trainer_state.json +0 -0
- training_loss.png +0 -0

README.md CHANGED

@@ -4,6 +4,7 @@ license: apache-2.0
 base_model: Qwen/Qwen3-8B
 tags:
 - llama-factory
+- full
 - generated_from_trainer
 model-index:
 - name: glm-4_6-freelancer-traces-pm

@@ -15,7 +16,7 @@ should probably proofread and complete it, then remove this comment. -->

 # glm-4_6-freelancer-traces-pm

-This model is a fine-tuned version of [Qwen/Qwen3-8B](https://huggingface.co/Qwen/Qwen3-8B) on
+This model is a fine-tuned version of [Qwen/Qwen3-8B](https://huggingface.co/Qwen/Qwen3-8B) on the DCAgent2/glm-4.6-freelancer-traces dataset.

 ## Model description

all_results.json ADDED

@@ -0,0 +1,16 @@
+{
+    "achieved_tflops_per_gpu": 1.757655959240902,
+    "achieved_tflops_per_gpu_theoretical": 145.67989620139622,
+    "epoch": 7.0,
+    "loss_nan_ranks": 0,
+    "loss_rank_avg": 0.2028736174106598,
+    "mfu_percent": 0.5633512689874686,
+    "mfu_percent_theoretical": 46.69227442352443,
+    "total_flos": 7.99498208344277e+17,
+    "train_loss": 0.28994313101414193,
+    "train_runtime": 56858.2694,
+    "train_samples_per_second": 0.684,
+    "train_steps_per_second": 0.043,
+    "valid_targets_mean": 2723.6,
+    "valid_targets_min": 1612
+}
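The metrics above can be cross-checked against each other with a few lines of arithmetic. The following is a minimal sketch, not part of the commit: the helper name `derive` and the derived key names are hypothetical, and the values are copied from the diff. It assumes `train_runtime` is in seconds and that `mfu_percent` is the achieved TFLOPS expressed as a percentage of some peak figure; the source does not document what the `_theoretical` variants measure, so the sketch only checks that both pairs imply the same peak.

```python
import json

# Values copied verbatim from the all_results.json diff above.
RESULTS_JSON = """
{
    "achieved_tflops_per_gpu": 1.757655959240902,
    "achieved_tflops_per_gpu_theoretical": 145.67989620139622,
    "epoch": 7.0,
    "mfu_percent": 0.5633512689874686,
    "mfu_percent_theoretical": 46.69227442352443,
    "train_runtime": 56858.2694,
    "train_samples_per_second": 0.684,
    "train_steps_per_second": 0.043
}
"""


def derive(results):
    """Compute rough derived quantities (hypothetical helper, for illustration)."""
    runtime_s = results["train_runtime"]  # assumed to be seconds
    samples_per_s = results["train_samples_per_second"]
    steps_per_s = results["train_steps_per_second"]
    return {
        "runtime_hours": runtime_s / 3600,
        # The rates are rounded to three decimals, so these are approximations.
        "approx_total_steps": runtime_s * steps_per_s,
        "approx_samples_per_step": samples_per_s / steps_per_s,
        # Peak TFLOPS implied by each (achieved, MFU) pair, under the
        # assumption that MFU = achieved / peak * 100.
        "implied_peak_tflops": results["achieved_tflops_per_gpu"]
        / (results["mfu_percent"] / 100),
        "implied_peak_tflops_theoretical": results[
            "achieved_tflops_per_gpu_theoretical"
        ]
        / (results["mfu_percent_theoretical"] / 100),
    }


results = json.loads(RESULTS_JSON)
derived = derive(results)

for name, value in derived.items():
    print(f"{name}: {value:.3f}")
```

Under these assumptions, both TFLOPS/MFU pairs imply the same peak of roughly 312 TFLOPS per GPU, the run lasted a little under 16 hours, and the ratio of the two throughput figures suggests roughly 16 samples per optimizer step. The last figure is only indicative, since it is computed from rounded rates.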

train_results.json ADDED

@@ -0,0 +1,16 @@
+{
+    "achieved_tflops_per_gpu": 1.757655959240902,
+    "achieved_tflops_per_gpu_theoretical": 145.67989620139622,
+    "epoch": 7.0,
+    "loss_nan_ranks": 0,
+    "loss_rank_avg": 0.2028736174106598,
+    "mfu_percent": 0.5633512689874686,
+    "mfu_percent_theoretical": 46.69227442352443,
+    "total_flos": 7.99498208344277e+17,
+    "train_loss": 0.28994313101414193,
+    "train_runtime": 56858.2694,
+    "train_samples_per_second": 0.684,
+    "train_steps_per_second": 0.043,
+    "valid_targets_mean": 2723.6,
+    "valid_targets_min": 1612
+}

trainer_state.json ADDED

The diff for this file is too large to render.
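Since the `trainer_state.json` diff is not rendered, a short sketch may help show how a loss curve is typically recovered from such a file. It assumes the file follows the Hugging Face `Trainer` state layout, where a top-level `log_history` list holds one dict per logging event. The function name `loss_curve` and the sample data are hypothetical and are not taken from this commit.

```python
def loss_curve(trainer_state):
    """Return (step, loss) pairs from a Trainer-style state dict.

    Entries without a per-step "loss" key (for example the final
    summary entry, which carries "train_loss" instead) are skipped.
    """
    points = []
    for entry in trainer_state.get("log_history", []):
        if "loss" in entry and "step" in entry:
            points.append((entry["step"], entry["loss"]))
    return points


# Hypothetical miniature state for illustration; NOT the real file contents.
sample_state = {
    "log_history": [
        {"step": 10, "loss": 0.9, "learning_rate": 1e-5},
        {"step": 20, "loss": 0.5, "learning_rate": 9e-6},
        {"step": 20, "train_loss": 0.7, "train_runtime": 12.3},
    ]
}

curve = loss_curve(sample_state)
print(curve)
```

With the real file, the dict would come from `json.load` on `trainer_state.json`, and the resulting pairs could be passed to any plotting library to produce a figure comparable to `training_loss.png`.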


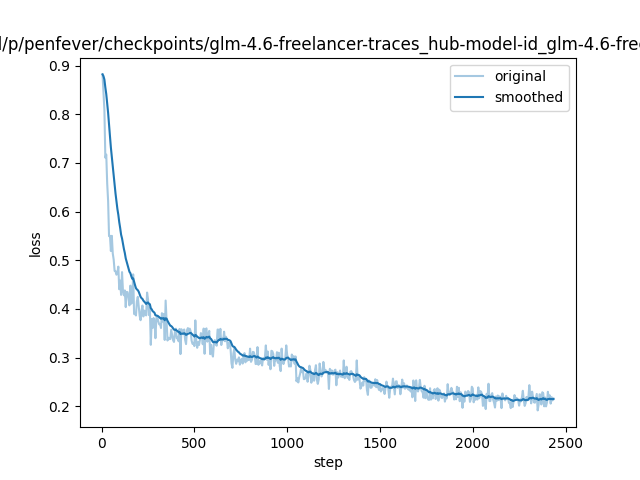

training_loss.png ADDED